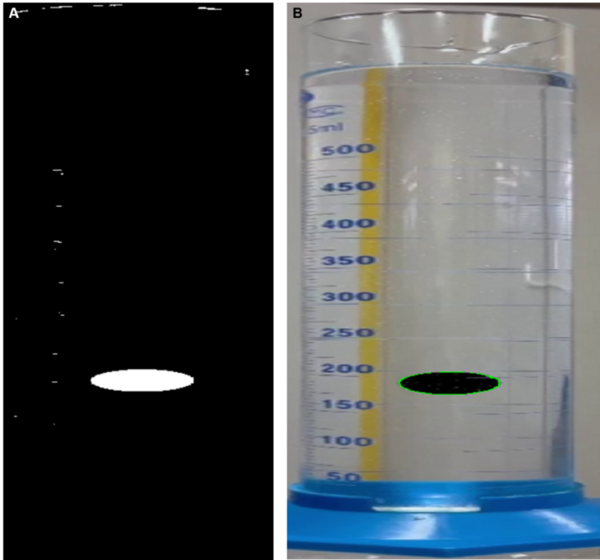

Here, recognizing the potentially harmful effects of algal blooms, the authors used satellite images to detect blooms in Wyoming water bodies based on the blooms' reflectance of near-infrared light. They found that this kind of remote monitoring may be a useful tool for issuing early warnings and advisories to people who live nearby.
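The detection idea can be sketched as a simple threshold test. Open water absorbs near-infrared (NIR) light strongly, so its NIR reflectance is low, while dense surface algae reflect more of it. The sketch below is only an illustration under stated assumptions and is not the authors' method: the input is assumed to be a grid of NIR surface reflectance values between 0 and 1, and the cutoff `0.1` and the helper names `flag_bloom_pixels` and `bloom_fraction` are hypothetical.

```python
from typing import List

Grid = List[List[float]]

# Hypothetical cutoff; a real study would calibrate this for its sensor and sites.
NIR_THRESHOLD = 0.1


def flag_bloom_pixels(nir: Grid, threshold: float = NIR_THRESHOLD) -> List[List[bool]]:
    """Mark pixels whose NIR reflectance exceeds the (assumed) threshold."""
    return [[value > threshold for value in row] for row in nir]


def bloom_fraction(mask: List[List[bool]]) -> float:
    """Return the share of pixels flagged as possible bloom (0.0 for an empty grid)."""
    total = sum(len(row) for row in mask)
    flagged = sum(sum(row) for row in mask)  # True counts as 1
    return flagged / total if total else 0.0


if __name__ == "__main__":
    # Toy 2x2 scene: top row looks like clear water, bottom row like a bloom.
    scene = [[0.02, 0.03], [0.15, 0.22]]
    mask = flag_bloom_pixels(scene)
    print(mask)                  # [[False, False], [True, True]]
    print(bloom_fraction(mask))  # 0.5
```

A monitoring workflow might repeat a test like this on each new image and raise an advisory when the flagged fraction for a lake passes some chosen level.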
