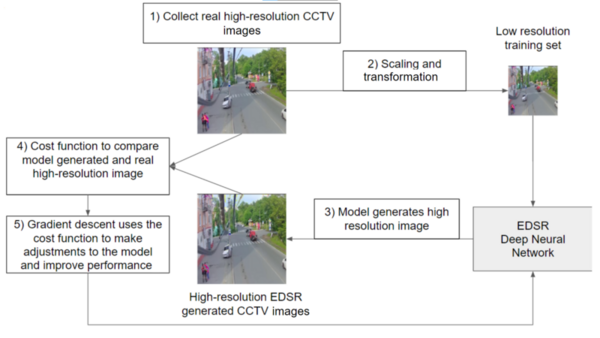

Deep residual neural networks for increasing the resolution of CCTV images

In this study, the authors hypothesized that closed-circuit television images could be stored with improved resolution by using enhanced deep residual (EDSR) networks.

Comparing and evaluating ChatGPT’s performance giving financial advice with Reddit questions and answers

Here, the authors compared financial advice output by ChatGPT to actual Reddit comments from the "r/Financial Planning" subreddit. By assessing the content, length, and advice of the model's responses, they found that while artificial intelligence can provide information, it fell short in delivery, clarity, and decisiveness.

Simple solving heuristics improve the accuracy of sudoku difficulty classifiers

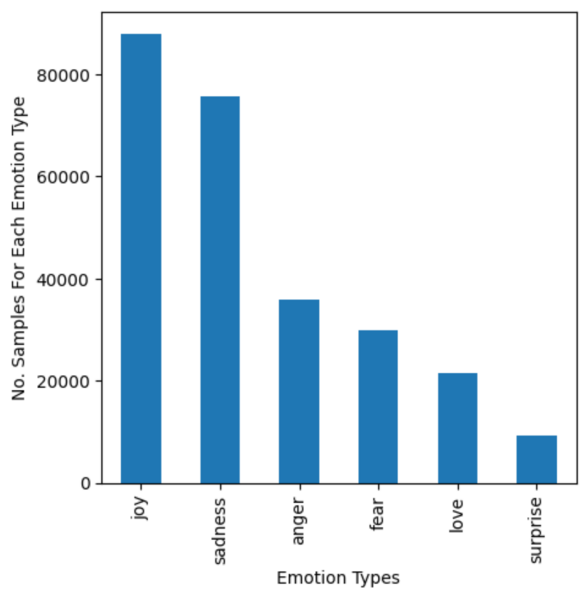

Training neural networks on text data to model human emotional understanding

The authors train a neural network to detect text-based emotions including joy, sadness, anger, fear, love, and surprise.

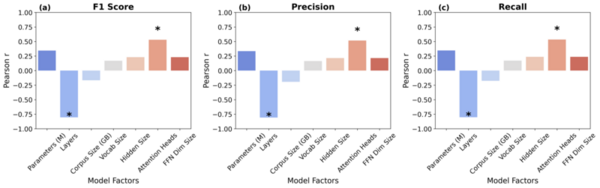

Evaluating key factors in emotion detection models for AI-driven personalized bibliotherapy

This study evaluates the potential of natural language processing (NLP) models in an emotion-driven bibliotherapy framework to help address mental health challenges.

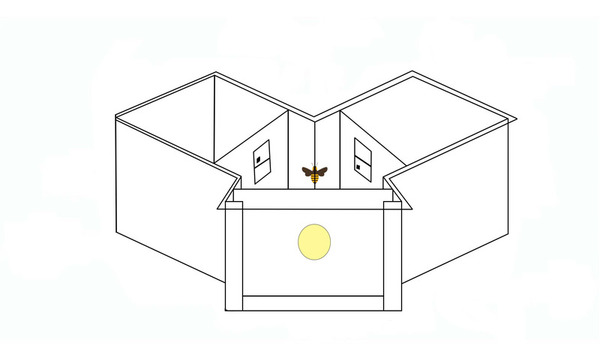

Identifying Neural Networks that Implement a Simple Spatial Concept

Modern artificial neural networks have been remarkably successful in various applications, from speech recognition to computer vision. However, it remains less clear whether they can implement abstract concepts, which are essential to generalization and understanding. To address this problem, the authors investigated the above vs. below task, a simple concept-based task that honeybees can solve, using a conventional neural network. They found that networks achieved 100% test accuracy when a visual target was presented below a black bar but only 50% test accuracy when it was presented below a reference shape.

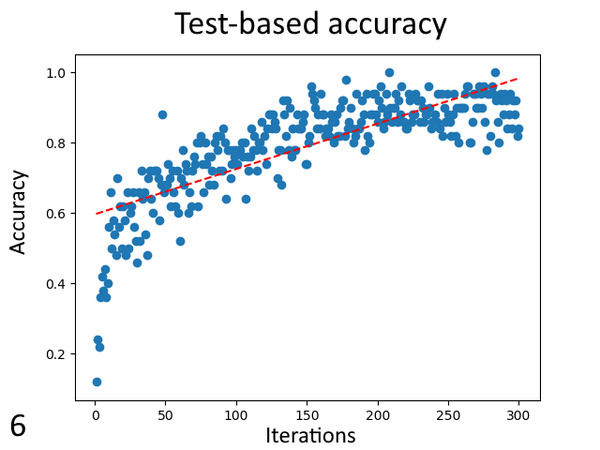

The Effect of Varying Training on Neural Network Weights and Visualizations

Neural networks are used throughout modern society to solve many problems commonly thought of as impossible for computers. Fountain and Rasmus designed a convolutional neural network and ran it with varying levels of training to see if consistent, accurate, and precise changes or patterns could be observed. They found that training introduced and strengthened patterns in the weights and visualizations, although the patterns observed may not be consistent across all neural networks.

Class distinctions in automated domestic waste classification with a convolutional neural network

Domestic waste classification using a convolutional neural network.

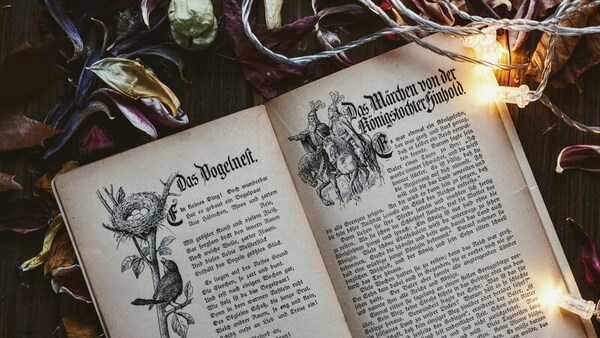

Part of speech distributions for Grimm versus artificially generated fairy tales

Here, the authors wanted to explore mathematical paradoxes in which there are multiple contradictory interpretations or analyses for a problem. They used ChatGPT to generate a novel dataset of fairy tales. They found statistical differences between the artificially generated text and human-produced text based on the distribution of part-of-speech elements.

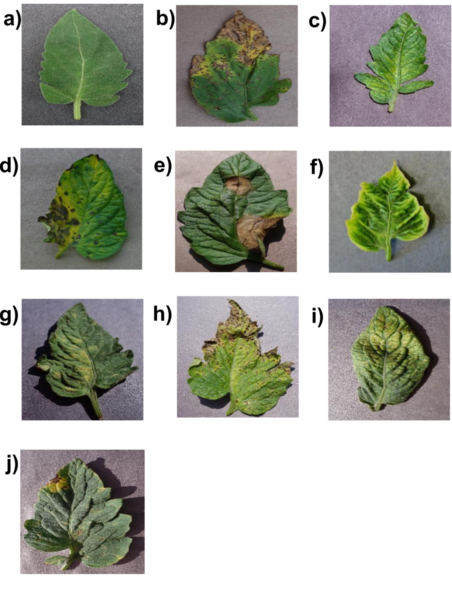

Tomato disease identification with shallow convolutional neural networks

Plant diseases can cause up to 50% crop yield loss for the popular tomato plant. A mobile device-based method to identify diseases from photos of symptomatic leaves via computer vision can be more effective due to its convenience and accessibility. To enable a practical mobile solution, a “shallow” convolutional neural network (CNN) with few layers, and thus low computational requirements, but with accuracy similar to that of deep CNNs is needed. In this work, we explored whether such a model was possible.
