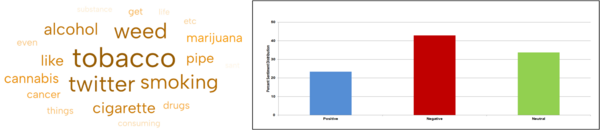

Using machine learning to understand social media discourse on the co-use of tobacco and cannabis

The authors developed a machine learning tool for studying social media discourse surrounding the co-use of tobacco and cannabis.

The effects of image manipulation on classification of cervical spondylosis X-ray images using deep learning

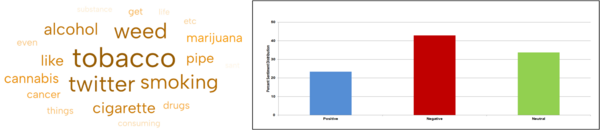

Drought prediction in the Midwestern United States using deep learning

The authors studied the ability of deep learning models to predict droughts in the Midwestern United States.

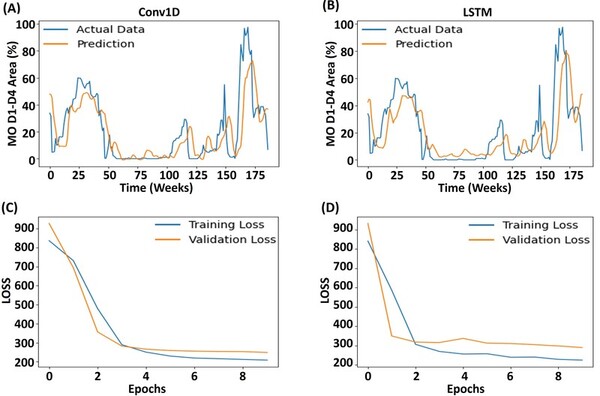

Impact of length of audio on music classification with deep learning

The authors examined how the length of an audio clip taken from a song affects the ability of deep learning models to correctly classify it by musical genre.

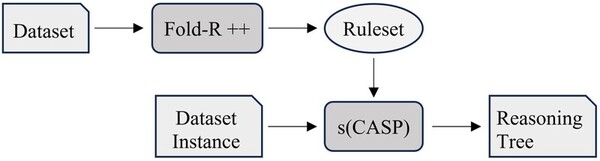

Explainable AI tools provide meaningful insight into rationale for prediction in machine learning models

The authors compare current machine learning algorithms with a new Explainable AI algorithm that produces a human-comprehensible decision tree alongside predictions.

Applying machine learning to breast cancer diagnosis: A high school student’s exploration using R

The authors combine fine needle aspiration biopsy and machine learning algorithms to develop a breast cancer detection method suitable for resource-constrained regions that lack access to mammograms.

Deep dive into predicting insurance premiums using machine learning

The authors examined how well factors such as age, pre-existing conditions, and geographic region predict an individual's health insurance premium.

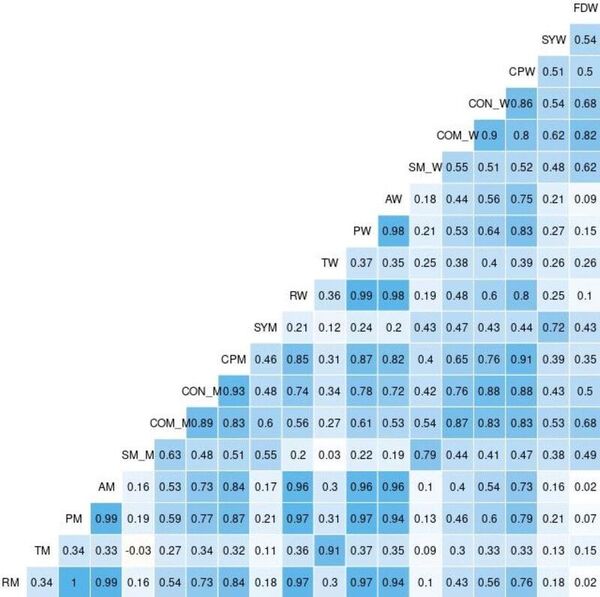

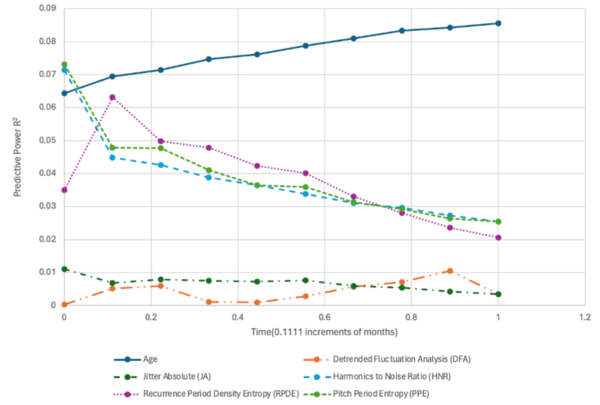

Using advanced machine learning and voice analysis features for Parkinson’s disease progression prediction

The authors examined whether voice features from audio clips can be used to predict the progression of Parkinson's disease.

Using two-step machine learning to predict harmful algal bloom risk

The authors used a two-step machine learning approach to predict the risk of harmful algal blooms.

Relationship between p62 and learning behavior in male and female mice deficient in hippocampal folliculin

Here, the authors hypothesized that reducing folliculin (FLCN) might affect p62 protein levels in the dorsal hippocampus of mice, given the potential functional connection between the two and p62's role in neurodegenerative diseases. Using western blots and a two-way ANOVA on young wild-type mice, they found that p62 levels correlated with FLCN expression, but ultimately concluded that there is no evidence of a functional connection between FLCN and p62 in this specific model.
