Modeling and optimization of epidemiological control policies through reinforcement learning

(1) Chatham High School, Chatham, New Jersey

https://doi.org/10.59720/22-157

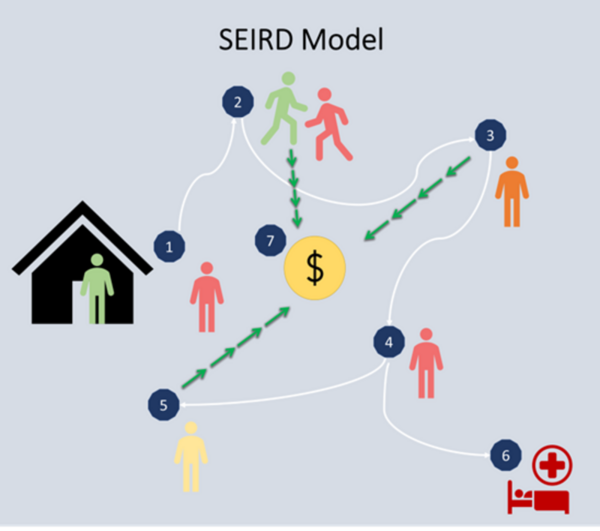

Pandemics involve the widespread transmission of a disease that affects both global and local health and economic patterns. The impact of a pandemic can be reduced by imposing restrictions on a community. However, while these restrictions lower infection and death rates, they can also lead to economic crises. Epidemiological models help propose pandemic control strategies based on non-pharmaceutical interventions such as social distancing, curfews, and lockdowns, while reducing the economic impact of these restrictions. Yet manually designing control strategies that account for both disease spread and economic status is non-trivial. Optimal strategies can instead be designed with multi-objective reinforcement learning (MORL) models, which show how restrictions can be applied to optimize the outcome of a pandemic. In this research, we used an epidemiological Susceptible, Exposed, Infected, Recovered, Deceased (SEIRD) model, a compartmental model that simulates a pandemic day by day. We combined the SEIRD model with a deep double recurrent Q-network to train a reinforcement learning agent that, guided by a reward function, selects the optimal restriction to enforce in the SEIRD simulation. We tested two agents, each with its own reward function and pandemic goal, to obtain two strategies. The first agent imposed long lockdowns to reduce the initial spread of the disease, followed by shorter, cyclical lockdowns to mitigate its resurgence. The second agent achieved similar infection rates but a stronger economy by implementing a cycle of 10 days of lockdown followed by 20 days without restrictions. This combination of reinforcement learning and epidemiological modeling mitigated both infections and economic damage across multiple pandemic scenarios.
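To make the day-by-day simulation loop concrete, here is a minimal Python sketch of a discrete-time SEIRD environment in which a daily restriction action scales the transmission rate. All parameter values, the two-action set, the weighted-sum `reward`, and every function name are hypothetical assumptions, not the authors' values or reward functions. The fixed `cyclic_policy` mimics the 10-day lockdown / 20-day open cycle reported for the second agent; a trained agent (a deep double recurrent Q-network in the paper, not implemented here) would replace it by choosing the action each day from the observed state.

```python
from dataclasses import dataclass


@dataclass
class SEIRDState:
    s: float  # susceptible
    e: float  # exposed
    i: float  # infected (infectious)
    r: float  # recovered
    d: float  # deceased

    @property
    def total(self) -> float:
        return self.s + self.e + self.i + self.r + self.d


# Hypothetical parameters, chosen for illustration only (not from the paper).
BETA = 0.5        # daily transmission rate with no restrictions
SIGMA = 1 / 5.0   # 1 / incubation period in days
GAMMA = 1 / 10.0  # daily recovery rate of infected individuals
MU = 0.002        # daily death rate of infected individuals

# Hypothetical action set: action -> (transmission multiplier, daily economic cost).
ACTIONS = {0: (1.0, 0.0), 1: (0.3, 1.0)}  # 0 = no restriction, 1 = lockdown


def step(state: SEIRDState, action: int) -> tuple[SEIRDState, float]:
    """Advance the compartments by one day (forward Euler, dt = 1 day)."""
    multiplier, _ = ACTIONS[action]
    living = state.s + state.e + state.i + state.r  # deceased do not mix
    new_exposed = BETA * multiplier * state.s * state.i / living
    new_infectious = SIGMA * state.e
    new_recovered = GAMMA * state.i
    new_deaths = MU * state.i
    next_state = SEIRDState(
        s=state.s - new_exposed,
        e=state.e + new_exposed - new_infectious,
        i=state.i + new_infectious - new_recovered - new_deaths,
        r=state.r + new_recovered,
        d=state.d + new_deaths,
    )
    return next_state, new_exposed


def reward(new_exposed: float, population: float, action: int,
           w_health: float = 100.0, w_econ: float = 0.5) -> float:
    """Hypothetical scalarized two-objective reward: penalize new cases and economic cost."""
    _, econ_cost = ACTIONS[action]
    return -(w_health * new_exposed / population + w_econ * econ_cost)


def cyclic_policy(day: int, lockdown_days: int = 10, open_days: int = 20) -> int:
    """Lock down for the first `lockdown_days` of every cycle, then lift restrictions."""
    return 1 if day % (lockdown_days + open_days) < lockdown_days else 0


def run(policy, days: int = 180, population: float = 1_000_000,
        initial_infected: float = 100) -> tuple[SEIRDState, float]:
    """Roll out a policy (day -> action) and return the final state and summed reward."""
    state = SEIRDState(population - initial_infected, 0.0, float(initial_infected), 0.0, 0.0)
    total_reward = 0.0
    for day in range(days):
        action = policy(day)
        state, new_exposed = step(state, action)
        total_reward += reward(new_exposed, population, action)
    return state, total_reward


if __name__ == "__main__":
    for name, policy in [("no restriction", lambda day: 0), ("10/20 cycle", cyclic_policy)]:
        final, ret = run(policy)
        print(f"{name}: deaths={final.d:.0f}, cumulative reward={ret:.2f}")
```

Changing `w_econ` relative to `w_health` is one simple way to express different "pandemic goals" as in the two agents above; the paper's actual reward formulations may differ.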
